Voice Bots: A Dangerous Game of Misinformation

In short

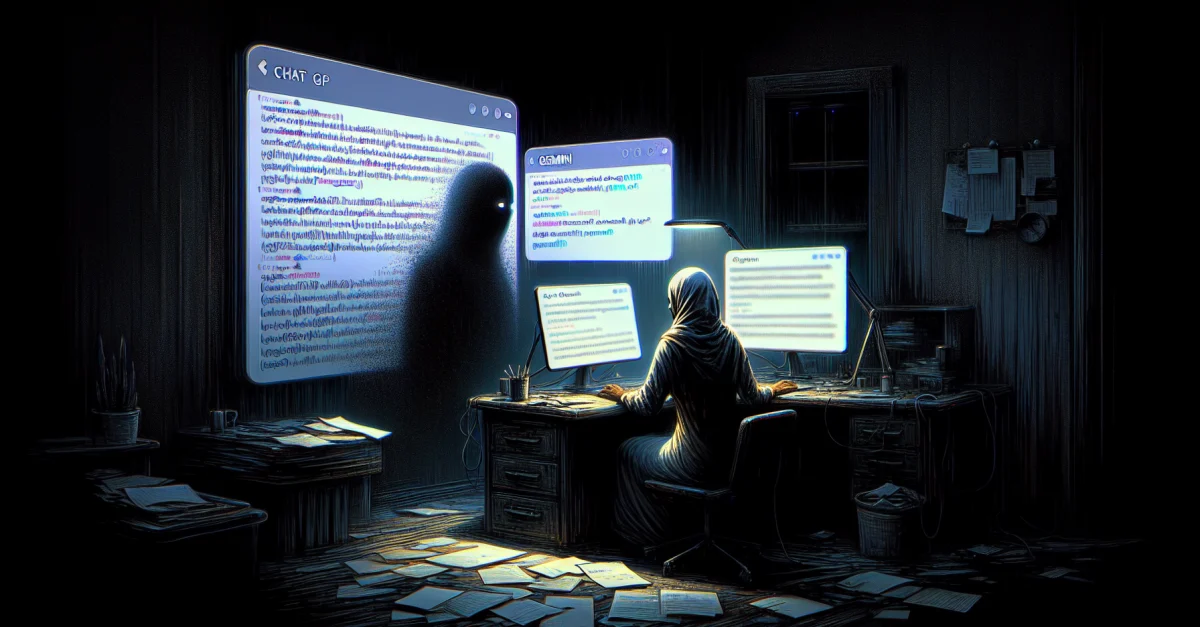

- Let’s be clear: ChatGPT and Gemini voice bots are failing us.

- When tricked, they repeat falsehoods up to 50% of the time.

- This isn’t just a minor flaw; it’s a fundamental issue.

ChatGPT and Gemini voice bots are easy to trick into passing along false claims, up to half the time. Amazon’s Alexa, by contrast, refuses to repeat these lies despite its quirks. Why does this matter? Misinformation can ruin reputations, derail projects, and cost businesses dearly. Ignore it, and you lose time and credibility.

The stakes are high, and companies that rely on these unreliable bots risk falling behind. It’s time to demand accountability from AI developers: we need systems that prioritize truth over convenience. Don’t let your organization fall victim to bots this easy to trick. Act now, or be left in the dust.

Source:

- ChatGPT and Gemini voice bots are easy to trick into spreading falsehoods (The Decoder)